Overview

This project aims to detect and translate American Sign Language (ASL) fingerspelling into text using transfer learning. The goal is to make technology more accessible for the Deaf and Hard of Hearing community by enabling faster and more accurate text entry through fingerspelling.

Motivation

Voice-enabled assistants are inaccessible to over 70 million Deaf individuals worldwide and 1.5+ billion people with hearing loss. Fingerspelling, a component of ASL, provides a promising alternative for text entry on mobile devices. Many Deaf smartphone users can fingerspell words faster than they can type on mobile keyboards.

Objective

The objective is to detect and translate ASL fingerspelling into text, using a dataset of more than three million fingerspelled characters produced by over 100 Deaf signers, in support of more accessible technology for the Deaf and Hard of Hearing community.

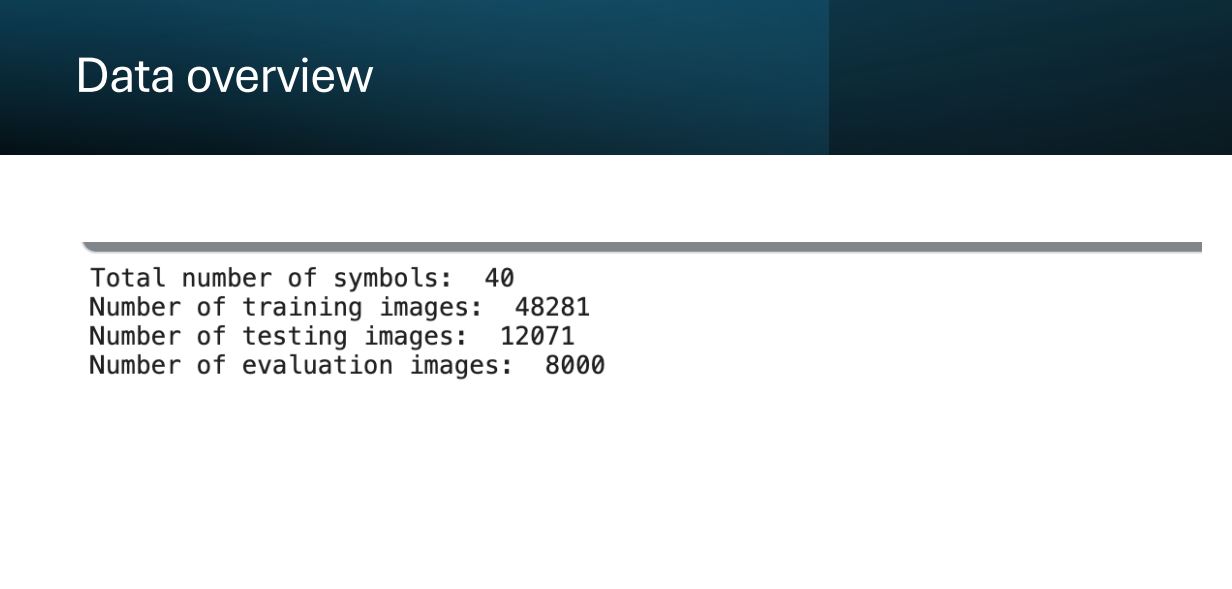

Data Overview

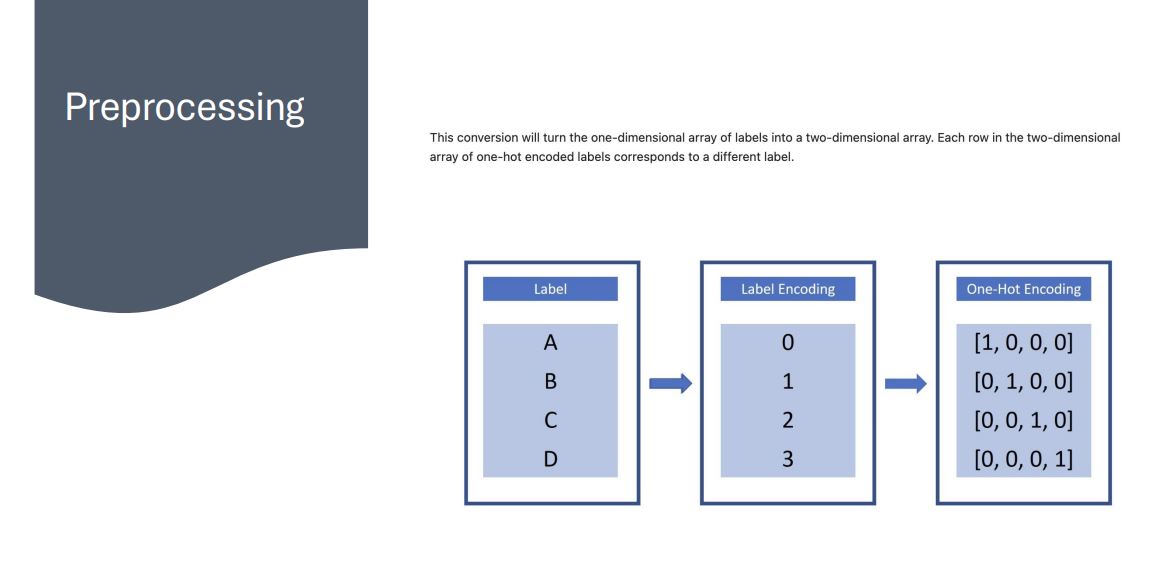

- Used a large dataset of fingerspelled characters captured with a smartphone selfie camera across varied backgrounds and lighting conditions.

- Preprocessed the images with normalization techniques to reduce distortions caused by lighting and shadows.
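
The normalization step could look something like the sketch below. The exact method used in this project is not specified here, so per-image standardization is an assumption, and `normalize_image` is a hypothetical helper name. The example shows that two frames with the same content but different brightness and contrast end up nearly identical after normalization.

```python
import numpy as np

def normalize_image(image: np.ndarray, eps: float = 1e-7) -> np.ndarray:
    """Scale a uint8 image to [0, 1], then standardize it per image.

    Subtracting the image's own mean and dividing by its own standard
    deviation reduces global brightness and contrast differences between
    frames. It does not remove local shadows.
    """
    scaled = image.astype(np.float32) / 255.0
    return (scaled - scaled.mean()) / (scaled.std() + eps)

# Synthetic stand-ins for camera frames (assumption: 64x64 RGB, uint8).
rng = np.random.default_rng(0)
dark = rng.integers(0, 100, size=(64, 64, 3), dtype=np.uint8)
# Same content, but brighter and with higher contrast.
bright = (dark.astype(np.float32) * 2.0 + 40.0).astype(np.uint8)

norm_dark = normalize_image(dark)
norm_bright = normalize_image(bright)

# After normalization the two frames are nearly indistinguishable.
print(np.abs(norm_dark - norm_bright).max())
```

Per-image standardization only compensates for global lighting changes; uneven shadows would need a local method, which is outside what this sketch covers.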

Pre-trained Model

- Leveraged the pretrained models VGG16 and ResNet50, whose learned features transfer to our dataset.

- Starting from pretrained weights saves training time and computational resources compared with training from scratch.
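
The idea of reusing learned features can be illustrated with a toy NumPy sketch: a frozen feature extractor feeds a small classification head, and only the head is trained. The fixed random projection `W_base` merely stands in for a pretrained VGG16 or ResNet50 base, and the data, sizes, and learning rate are all made up for illustration. This is not the project's training code.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for a pretrained base (assumption: a fixed random projection
# plays the role of the VGG16/ResNet50 convolutional layers).
W_base = rng.normal(size=(20, 32))
W_base_before = W_base.copy()

def extract_features(x):
    """Frozen feature extractor: projection, ReLU, then L2 normalization."""
    f = np.maximum(x @ W_base, 0.0)
    return f / (np.linalg.norm(f, axis=1, keepdims=True) + 1e-8)

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

# Synthetic 3-class data standing in for image inputs.
num_classes, n = 3, 300
centers = rng.normal(size=(num_classes, 20)) * 3.0
labels = rng.integers(0, num_classes, size=n)
inputs = centers[labels] + rng.normal(size=(n, 20))

features = extract_features(inputs)  # computed once; the base is never updated
one_hot = np.eye(num_classes)[labels]

# Trainable head: the only parameters that gradient descent touches.
W_head = np.zeros((32, num_classes))
b_head = np.zeros(num_classes)
lr = 0.5

losses = []
for _ in range(200):
    probs = softmax(features @ W_head + b_head)
    losses.append(float(-np.mean(np.log(probs[np.arange(n), labels] + 1e-12))))
    grad_logits = (probs - one_hot) / n  # gradient of mean cross-entropy
    W_head -= lr * (features.T @ grad_logits)
    b_head -= lr * grad_logits.sum(axis=0)

print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

Because the base is frozen, its features are computed once and only the small head is updated, which is where the savings in time and compute come from.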

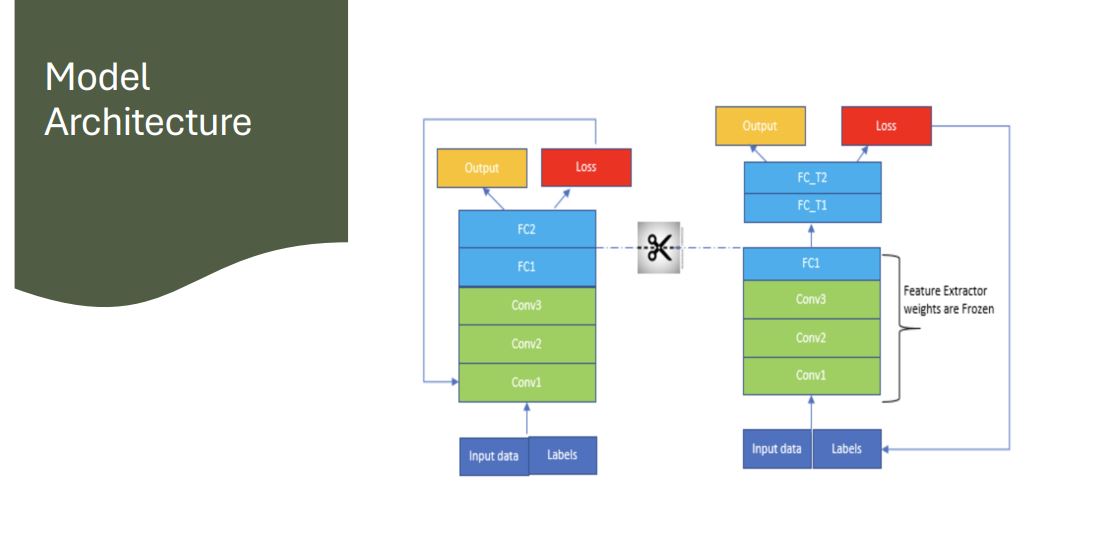

Model Architecture

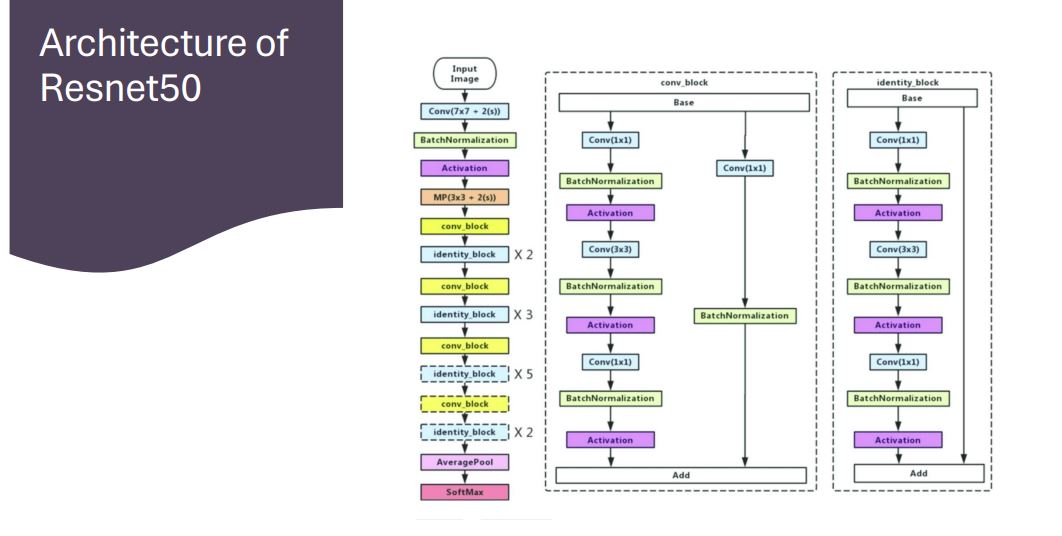

- Implemented VGG16 and ResNet50 models; the distinguishing features of each architecture are described below.

Models Used

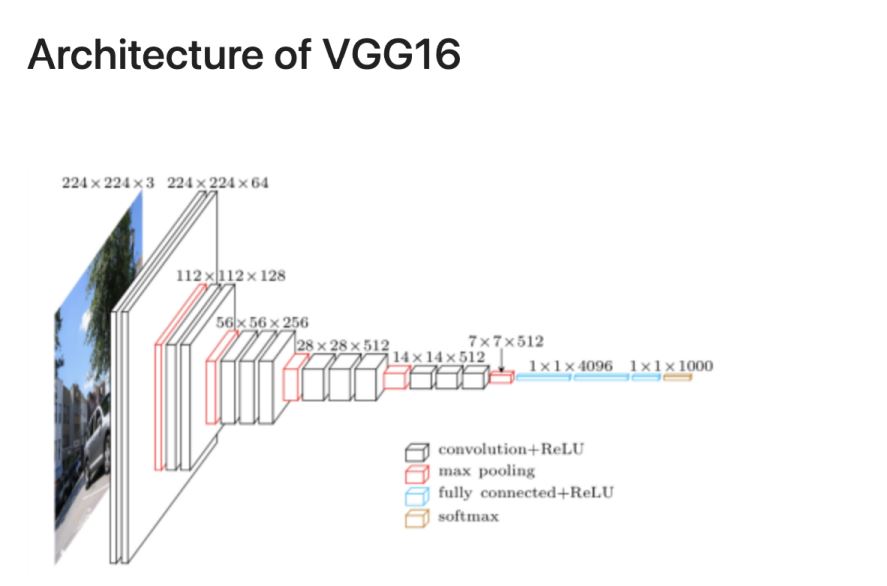

- VGG16: A convolutional neural network with 16 weight layers (13 convolutional and 3 fully connected), built from stacked 3x3 convolutions with same padding, interleaved with 2x2 max-pooling layers.
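
To make the effect of same padding and max pooling concrete, the sketch below traces the spatial size of a feature map through VGG16's five convolutional blocks. The 224x224 input size is an assumption (it is the standard ImageNet size, not necessarily the one used in this project), and the function names are illustrative.

```python
def same_conv_size(size: int, kernel: int = 3, stride: int = 1) -> int:
    """Output size of a convolution with 'same' padding for an odd kernel."""
    pad = (kernel - 1) // 2
    return (size + 2 * pad - kernel) // stride + 1

def max_pool_size(size: int, pool: int = 2, stride: int = 2) -> int:
    """Output size of an unpadded max-pooling layer."""
    return (size - pool) // stride + 1

# (number of 3x3 conv layers, output channels) for each VGG16 block.
VGG16_BLOCKS = [(2, 64), (2, 128), (3, 256), (3, 512), (3, 512)]

def trace_vgg16(size: int = 224):
    """Return the (height, width, channels) after each block's pooling layer."""
    shapes = []
    for num_convs, channels in VGG16_BLOCKS:
        for _ in range(num_convs):
            size = same_conv_size(size)  # 3x3 same-padded conv keeps the size
        size = max_pool_size(size)       # 2x2 pooling halves it
        shapes.append((size, size, channels))
    return shapes

shapes = trace_vgg16(224)
# 13 convolutional layers plus 3 fully connected layers.
num_weight_layers = sum(n for n, _ in VGG16_BLOCKS) + 3

for shape in shapes:
    print(shape)
print(num_weight_layers)  # 16
```

The convolutions never change the spatial size; only the five pooling layers do, taking a 224x224 input down to 7x7.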

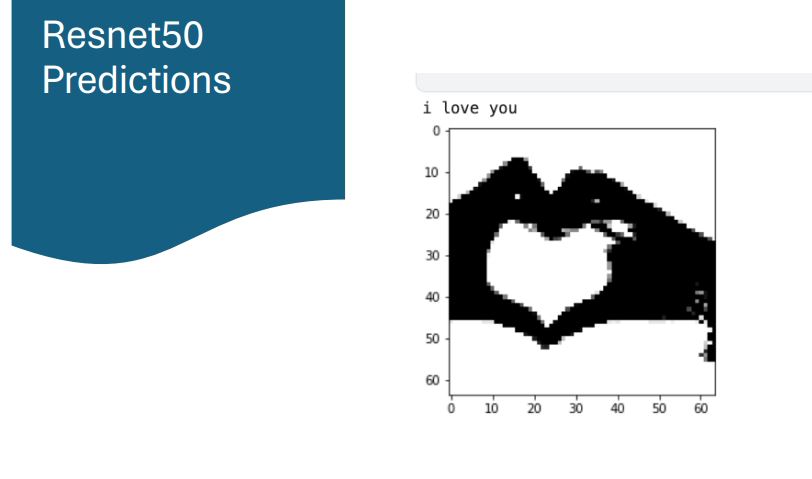

- ResNet50: A convolutional neural network with 50 layers, built from residual blocks whose skip connections add each block's input to its output, which alleviates the vanishing gradient problem in deep networks.
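
A residual block computes `relu(F(x) + x)`, where `F` is a small stack of layers and `x` is passed around it unchanged. The NumPy sketch below is a simplification: it uses two dense layers for `F`, whereas ResNet50 itself uses convolutional bottleneck blocks, and all sizes and weights here are arbitrary.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def residual_block(x, w1, w2):
    """Compute relu(F(x) + x), where F is two dense layers with a ReLU between."""
    residual = relu(x @ w1) @ w2
    return relu(residual + x)  # skip connection: add the input back

rng = np.random.default_rng(0)
x = relu(rng.normal(size=(4, 8)))  # non-negative activations, as after a ReLU

# With zero weights the residual branch contributes nothing,
# so the block reduces to the identity on x.
zeros = np.zeros((8, 8))
identity_out = residual_block(x, zeros, zeros)

# With small random weights the block is a small perturbation of its input.
w1 = rng.normal(size=(8, 8)) * 0.1
w2 = rng.normal(size=(8, 8)) * 0.1
out = residual_block(x, w1, w2)

print(np.array_equal(identity_out, x))  # True
```

Because the input is added back directly, the gradient always has a path through the skip connection that bypasses the weights in `F`, which is why stacking many such blocks remains trainable.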

Training

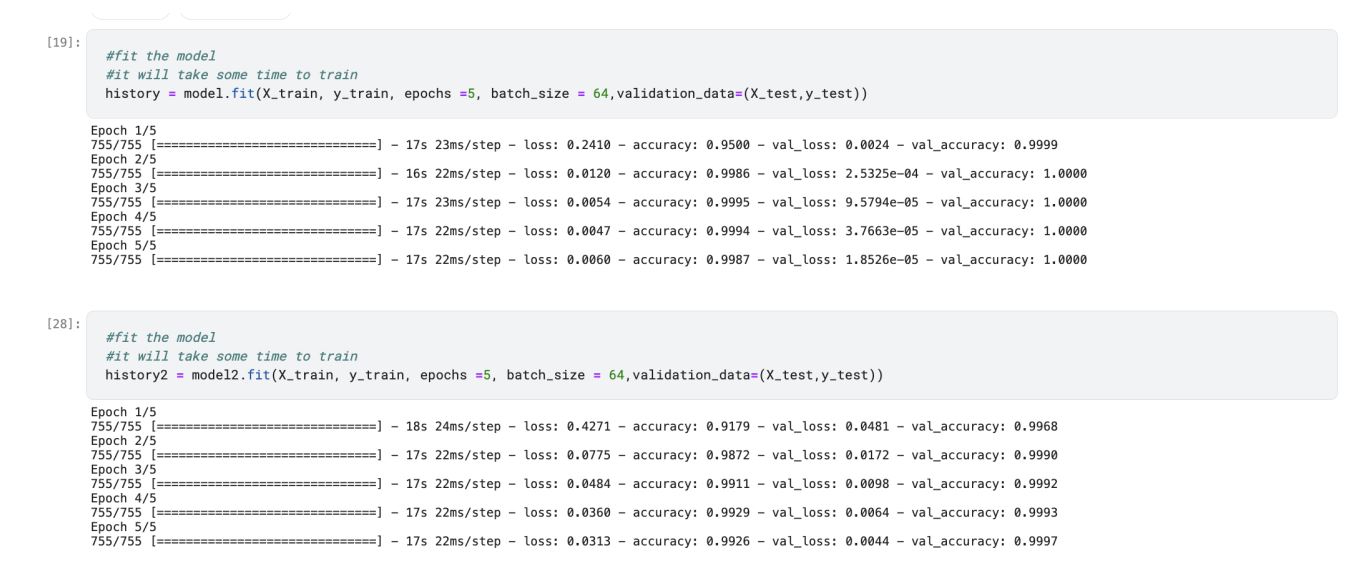

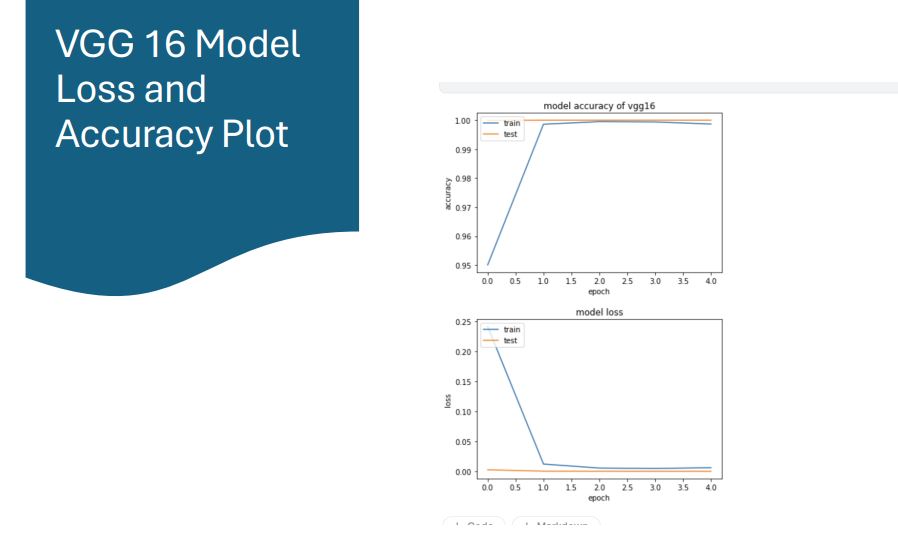

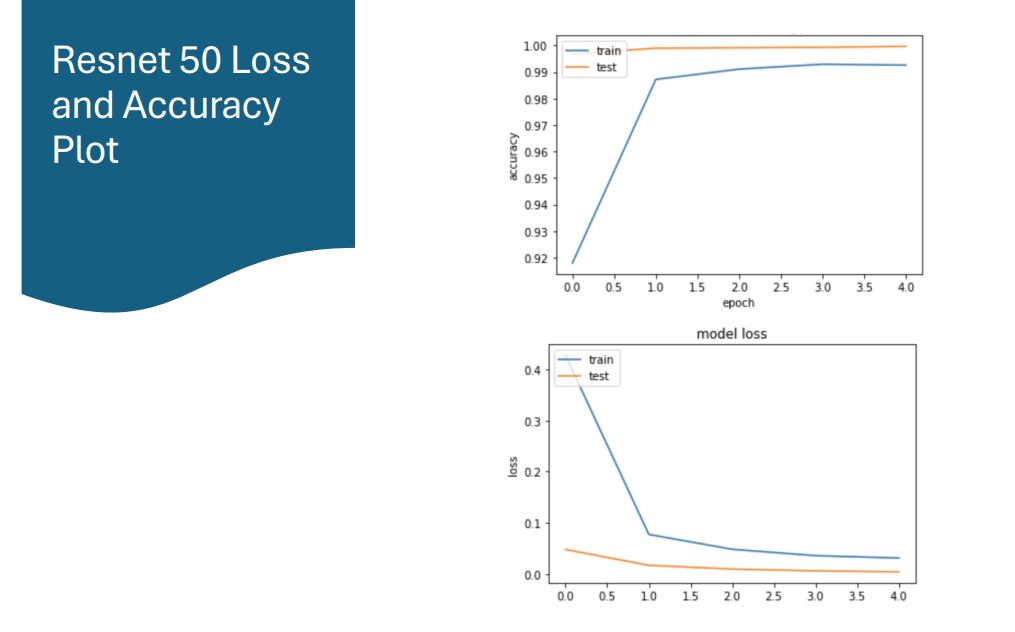

- Conducted training and evaluation, with loss and accuracy plots generated for both models.
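
For reference, the two quantities such plots track can be computed from a model's predicted class probabilities as in the sketch below. The probabilities and labels are made-up values, and categorical cross-entropy is assumed as the loss since the loss function is not named here.

```python
import numpy as np

def cross_entropy(probs: np.ndarray, labels: np.ndarray, eps: float = 1e-12) -> float:
    """Mean negative log-probability assigned to the true class."""
    picked = probs[np.arange(len(labels)), labels]
    return float(-np.mean(np.log(picked + eps)))

def accuracy(probs: np.ndarray, labels: np.ndarray) -> float:
    """Fraction of samples whose most probable class is the true class."""
    return float(np.mean(probs.argmax(axis=1) == labels))

# Hypothetical predictions for four samples over three classes.
probs = np.array([[0.7, 0.2, 0.1],
                  [0.1, 0.8, 0.1],
                  [0.3, 0.4, 0.3],
                  [0.2, 0.2, 0.6]])
labels = np.array([0, 1, 2, 2])

loss = cross_entropy(probs, labels)
acc = accuracy(probs, labels)
print(acc)  # 0.75: the third sample is misclassified
```

Recording these two values once per epoch, for both the training and validation sets, gives the curves shown in loss and accuracy plots.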

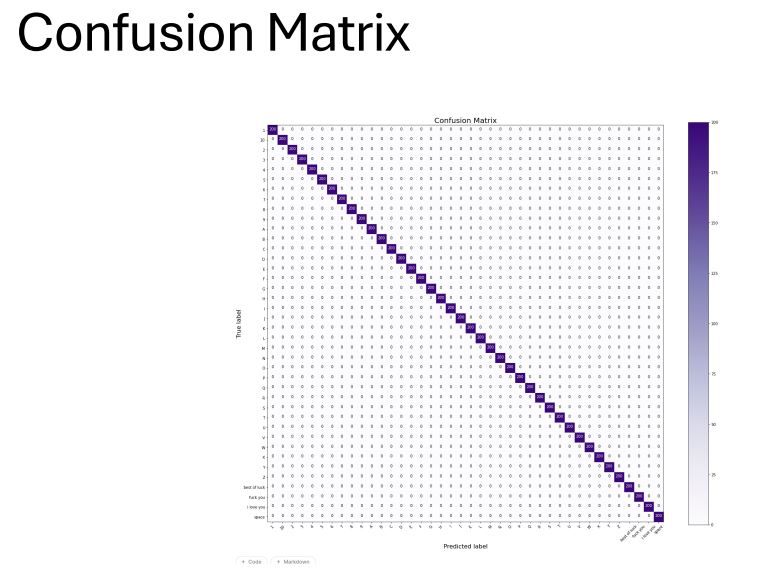

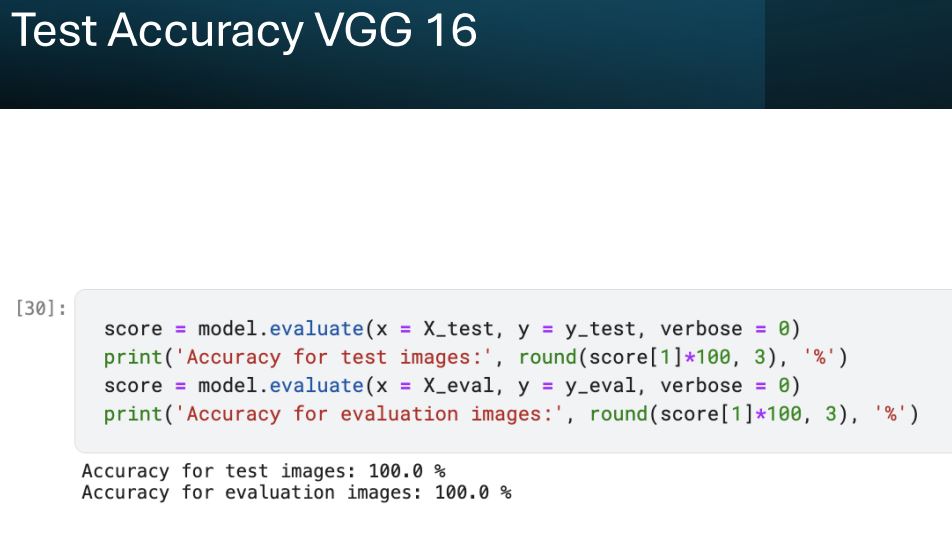

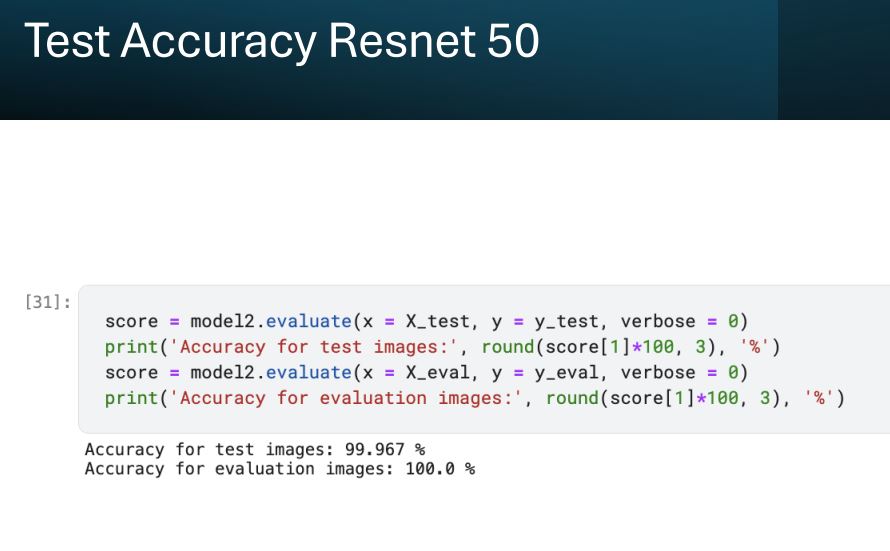

Results

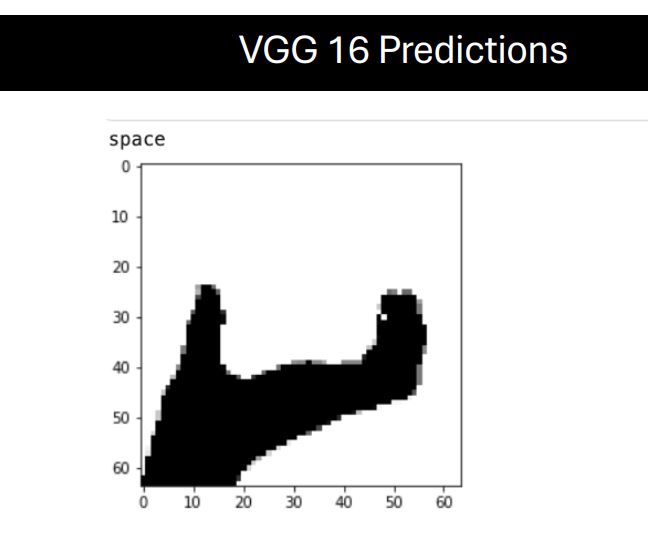

- VGG16: Achieved significant accuracy in recognizing ASL fingerspelling.

- ResNet50: Demonstrated robust performance in sign language recognition tasks.

Future Scope

- Develop a real-time web application for sign language recognition.

- Enable camera access to capture live sign language gestures and utilize machine learning models for real-time processing.

- Display the translated text directly in the web application interface, improving accessibility and communication for Deaf and Hard of Hearing users.

Impact

- Transforming the way people communicate and interact online.

- Empowering individuals with disabilities to participate more fully in digital spaces.

This project demonstrates the potential of using advanced machine learning models and transfer learning to address significant accessibility challenges faced by the Deaf and Hard of Hearing community.